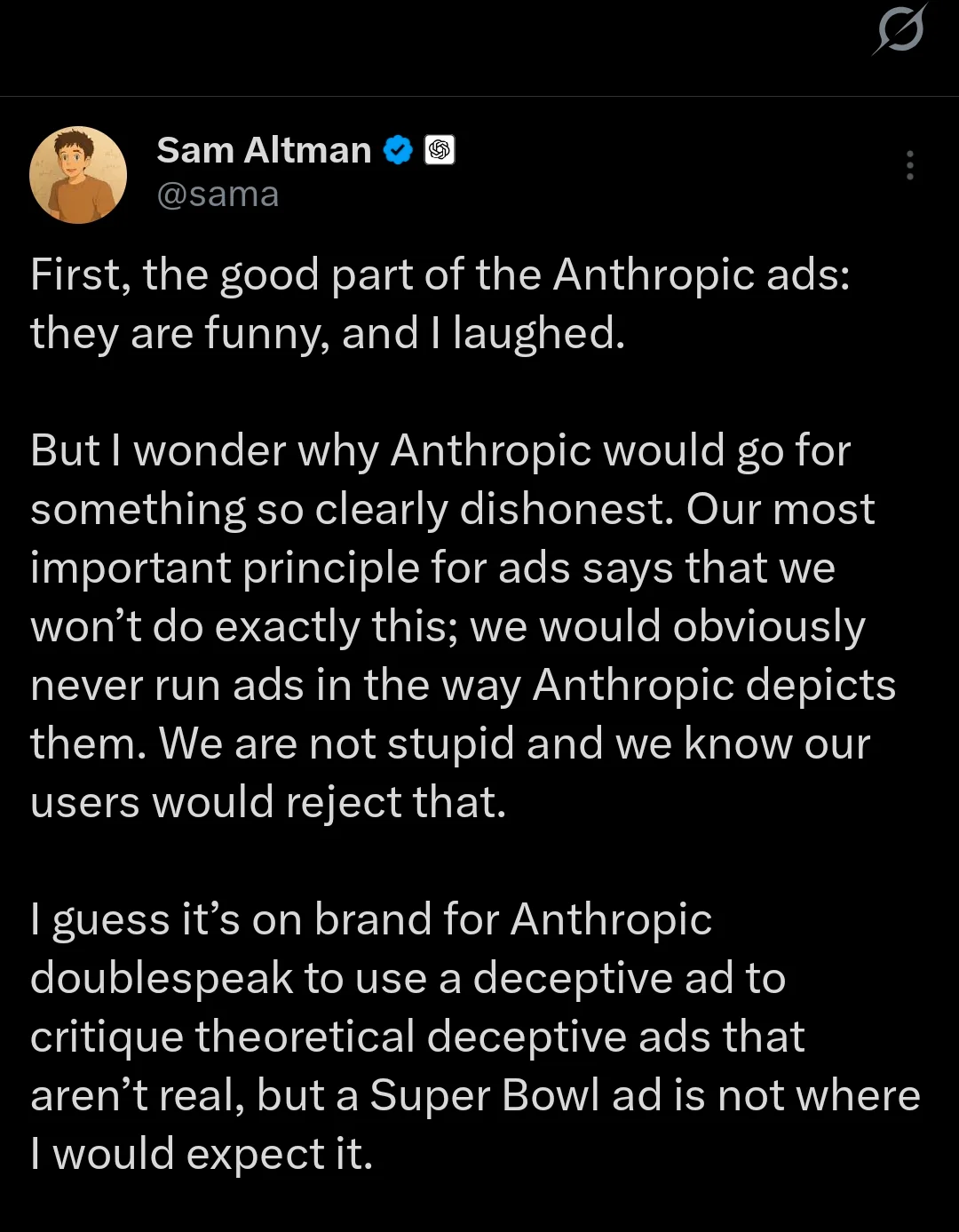

After Anthropic’s multimillion-dollar Super Bowl ads and Sam Altman’s public criticism, the real issue emerges: OpenAI has a structural cost problem, and it comes from massive demand, not weak demand.

ChatGPT now has roughly 800 million weekly active users. AI is not SaaS: serving one more SaaS user costs almost nothing, but every prompt requires real-time computation. More usage means more GPUs, more energy, more data centers.

Between 2023 and 2025, revenue grew ~10×, compute scaled similarly, and power consumption hit ~2 gigawatts. Even with $20B+ annualized revenue, internal projections show burn accelerating toward $35B per year by 2027.

That isn’t mismanagement—it’s compute economics. Alphabet just guided $175–185B in total capex for 2026, most aimed at AI infrastructure. Google sets the benchmark for what hyperscale AI spending looks like.

So how does a standalone AI lab without search, ads, Android, or Workspace balance cost and revenue? Most ChatGPT users are on the free tier. They generate cost, not revenue. Without a third revenue stream beyond subscriptions and APIs, the math breaks.
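To make "the math breaks" concrete, here is a rough back-of-the-envelope sketch. Only the 800 million weekly users figure comes from the text above; the paid share, subscription price, and per-user serving cost are hypothetical placeholders chosen for illustration, not reported numbers, and the function names are made up for this example.

```python
def annual_gap(users, paid_share, price_per_month, cost_per_user_per_month,
               other_revenue_per_year=0.0):
    """Return (revenue, cost, gap) in dollars per year.

    A positive gap means serving cost exceeds revenue.
    All inputs except `users` are illustrative assumptions.
    """
    paid_users = users * paid_share
    revenue = paid_users * price_per_month * 12 + other_revenue_per_year
    # Every user, free or paid, consumes compute.
    cost = users * cost_per_user_per_month * 12
    return revenue, cost, cost - revenue


def breakeven_per_free_user(users, paid_share, price_per_month,
                            cost_per_user_per_month):
    """Monthly revenue each free user would need to bring in
    (for example through ads) to close the gap. Returns 0.0 if no gap."""
    _, _, gap = annual_gap(users, paid_share, price_per_month,
                           cost_per_user_per_month)
    free_users = users * (1 - paid_share)
    if gap <= 0 or free_users <= 0:
        return 0.0
    return gap / free_users / 12


if __name__ == "__main__":
    USERS = 800_000_000   # weekly active users, from the text
    PAID_SHARE = 0.05     # hypothetical: 5% of users pay
    PRICE = 20.0          # hypothetical: dollars per month
    COST = 2.0            # hypothetical: serving cost per user per month

    revenue, cost, gap = annual_gap(USERS, PAID_SHARE, PRICE, COST)
    print(f"revenue ${revenue / 1e9:.1f}B, cost ${cost / 1e9:.1f}B, "
          f"gap ${gap / 1e9:.1f}B per year")
    needed = breakeven_per_free_user(USERS, PAID_SHARE, PRICE, COST)
    print(f"each free user must bring in ${needed:.2f}/month to break even")
```

Under these made-up inputs the shape of the problem is what matters: cost scales with every user, while subscription revenue scales only with the paying minority, so the shortfall has to be covered by some other source spread across the free tier.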

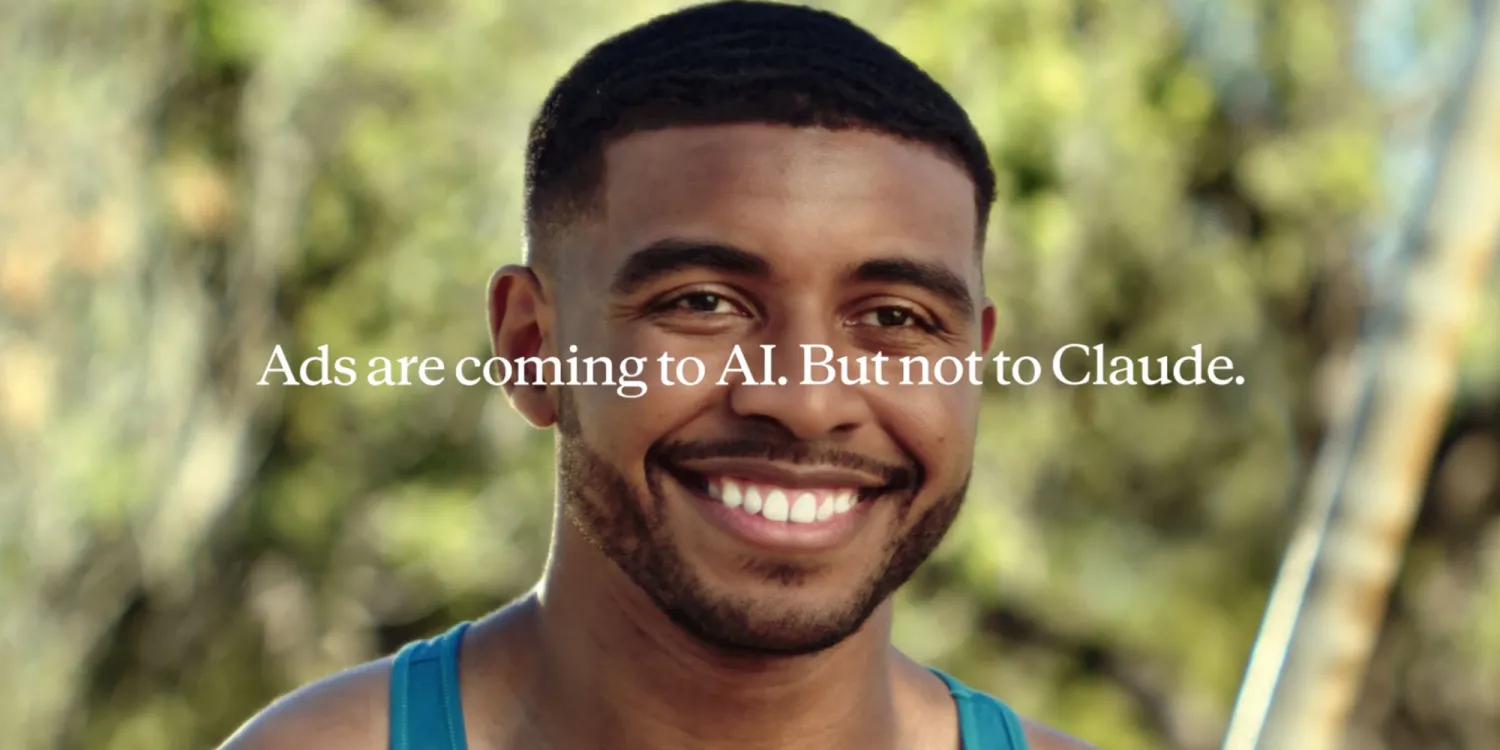

That’s why ads are back on the table. Not ideology, just marginal cost math. As models commoditize, small price or quality gaps drive churn. There’s no strong lock-in, so raising subscription prices is a risky way to close the gap.

This is why the IPO narrative keeps resurfacing. Running a global, free, always-on AI system costs tens of billions every year. Someone must finance it.

So who pays to run AI at planetary scale? Either someone subsidizes it, the user pays, or ads finance it. Everything else is story.